Real-time Breathing System for Virtual Characters

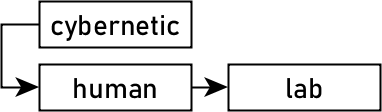

Human speech production requires the dynamic regulation of airflow through the vocal system. While virtual character systems are commonly capable of speech output, they rarely take breathing during speaking (speech breathing) into account. We believe that integrating dynamic speech breathing into virtual characters can contribute significantly to their realism. In this project, we develop a novel control architecture for generating speech breathing in virtual characters. The architecture is informed by behavioral, linguistic, and anatomical knowledge of human speech breathing. Based on textual input and controlled by a set of low- and high-level parameters, the system produces dynamic signals in real time that control the virtual character's anatomy (thorax, abdomen, head, nostrils, and mouth) and sound production (speech and breathing). The system is implemented in Python, offers a graphical user interface for easy parameter control, and simultaneously drives the visual and auditory aspects of speech breathing by integrating the character animation system SmartBody and the audio synthesis platform SuperCollider. Beyond contributing to realism, the system can flexibly generate a wide range of speech breathing behaviors that convey information about the speaker, such as mood, age, and health.
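The idea of turning text into a real-time breathing signal can be illustrated with a minimal sketch. It assumes a much simpler model than the one described above: text is split into breath groups at punctuation, each group is preceded by an inhalation whose duration and depth grow with the number of words about to be spoken, and lung volume falls linearly back to rest while speaking. All names and values (`schedule`, `lung_volume`, `WORDS_PER_SECOND`, `VOLUME_PER_WORD`, and so on) are hypothetical illustrations, not the project's actual API or parameters.

```python
import re
from dataclasses import dataclass

# Hypothetical parameters, chosen for illustration only (not from the original system).
WORDS_PER_SECOND = 2.5     # assumed speaking rate during exhalation
MIN_INHALE_S = 0.3         # assumed shortest inhalation before a breath group
INHALE_S_PER_WORD = 0.05   # assumed extra inhalation time per upcoming word
VOLUME_PER_WORD = 0.04     # assumed lung-volume increase (normalized) per upcoming word
MAX_VOLUME = 1.0           # normalized maximum lung volume above rest


@dataclass
class BreathGroup:
    text: str
    inhale_start: float  # seconds; inhalation begins
    speech_start: float  # seconds; inhalation ends and speech begins
    speech_end: float    # seconds; speech ends, lungs back at rest
    depth: float         # normalized lung-volume increase for this group


def split_breath_groups(text: str) -> list[str]:
    """Split text into breath groups at punctuation (a simplifying assumption)."""
    parts = re.split(r"[.,;:!?]+", text)
    return [p.strip() for p in parts if p.strip()]


def schedule(text: str) -> list[BreathGroup]:
    """Plan one inhalation per breath group; longer groups get longer, deeper breaths."""
    groups, t = [], 0.0
    for chunk in split_breath_groups(text):
        n_words = len(chunk.split())
        inhale = MIN_INHALE_S + INHALE_S_PER_WORD * n_words
        speech = n_words / WORDS_PER_SECOND
        depth = min(MAX_VOLUME, VOLUME_PER_WORD * n_words)
        groups.append(BreathGroup(chunk, t, t + inhale, t + inhale + speech, depth))
        t += inhale + speech
    return groups


def lung_volume(groups: list[BreathGroup], t: float) -> float:
    """Piecewise-linear lung volume above rest: rises while inhaling, falls while speaking."""
    for g in groups:
        if g.inhale_start <= t < g.speech_start:
            return g.depth * (t - g.inhale_start) / (g.speech_start - g.inhale_start)
        if g.speech_start <= t < g.speech_end:
            return g.depth * (g.speech_end - t) / (g.speech_end - g.speech_start)
    return 0.0  # at rest before the first or after the last breath group


# Usage: plan the utterance, then poll the signal at 30 Hz as an animation loop might.
plan = schedule("Hello there, how are you today? I am fine.")
for g in plan:
    print(f"{g.text!r}: inhale from {g.inhale_start:.2f}s, speak {g.speech_start:.2f}-{g.speech_end:.2f}s")
samples = [lung_volume(plan, i / 30) for i in range(int(plan[-1].speech_end * 30))]
```

Polling `lung_volume` at a fixed frame rate mirrors how a real-time loop could sample such a signal to drive, for example, thorax and abdomen motion or the loudness of a breathing sound. A fuller model would add pauses, non-speech breathing cycles, and the low- and high-level parameters mentioned above.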

References

Speech Breathing in Virtual Humans: An Interactive Model and Empirical Study. Ulysses Bernardet, Sin-Hwa Kang, Andrew Feng, Steve DiPaola, and Ari Shapiro. In 2019 IEEE Virtual Humans and Crowds for Immersive Environments (VHCIE), pages 1–9. IEEE, March 2019.

A Dynamic Speech Breathing System for Virtual Characters. Ulysses Bernardet, Sin-Hwa Kang, Andrew Feng, Steve DiPaola, and Ari Shapiro. In Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics), volume 10498 LNAI, pages 43–52, 2017.